When it comes to autoscaling in Kubernetes, most people know about the basics - scale up when traffic spikes, scale down when it’s quiet. But behind the scenes, Kubernetes is running a finely coordinated operation with multiple moving parts.

Understanding how autoscalers work together - and what actually happens under the hood when your app scales - is key to building reliable, responsive systems.

Let’s dive into how Kubernetes handles both application-level and infrastructure-level scaling, and walk through the real-world chain of events that gets your pods running when the heat is on.

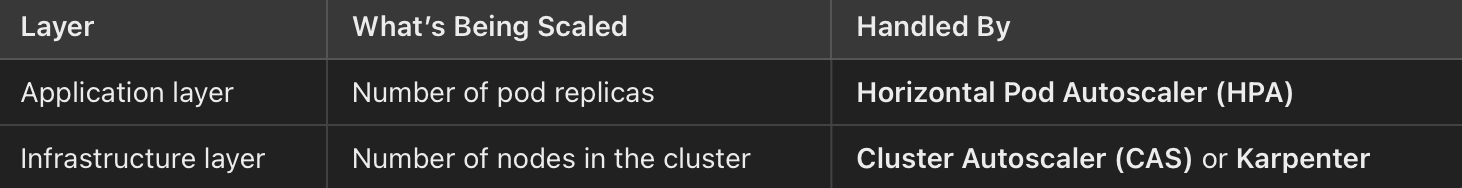

Scaling at Two Levels

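Kubernetes doesn’t just scale pods. It scales at two levels. At the application level, the Horizontal Pod Autoscaler (HPA) adds or removes pod replicas so your applications can handle the load. At the infrastructure level, Cluster Autoscaler (CAS) or Karpenter adds or removes nodes so your cluster has the capacity to run everything.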

How Autoscaling Really Works

- App load increases

Let’s say your application suddenly gets more traffic, perhaps from a product launch or just peak usage hours. The CPU usage on your pods starts to rise.

- HPA adjusts the replica count

The Horizontal Pod Autoscaler kicks in. It monitors resource usage (like CPU or memory) and sees that demand is climbing. Based on its configuration, it increases the replicas value in your Deployment (or StatefulSet, etc.).
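To make the replica adjustment concrete, here is a minimal sketch of the ratio-based calculation described in the Kubernetes HPA documentation: desired replicas = ceil(current replicas × current metric / target metric). The function name and example numbers are illustrative assumptions, and the real controller also handles missing metrics, pod readiness, and stabilization windows, none of which are modeled here.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA calculation (illustrative, not the controller's code).

    current_metric and target_metric use the same unit, for example
    average CPU utilization as a percentage of the pods' CPU requests.
    """
    ratio = current_metric / target_metric
    # Within the tolerance band the HPA leaves the replica count alone.
    # 0.1 is assumed here to match the documented default tolerance.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # The result is always clamped to the configured min/max bounds.
    return max(min_replicas, min(max_replicas, desired))


# 4 replicas averaging 90% CPU against a 60% target: the HPA asks for 6.
print(desired_replicas(4, 90, 60))
```

The same formula drives scale-down: 6 replicas averaging 30% against a 60% target would be cut to 3.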

- Deployment creates new pods

The Deployment controller notices the updated replica count and begins spinning up the additional pods needed to meet demand.

- Pods go into Pending state

Now here’s the catch: Kubernetes tries to schedule these new pods onto your existing nodes… but there’s not enough room. Maybe the cluster is already maxed out, or the pods have high CPU or memory requests.

These new pods enter a Pending state - they’re waiting for space to become available.
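Why a pod ends up Pending can be sketched as a simple fit check on resource requests. This is a deliberately simplified model: the `Node` class, the `schedule` function, and the numbers are hypothetical, and the real scheduler also evaluates affinity, taints and tolerations, topology constraints, and more.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    allocatable_cpu_m: int    # allocatable CPU in millicores
    allocatable_mem_mi: int   # allocatable memory in MiB
    requested_cpu_m: int = 0  # sum of CPU requests already placed here
    requested_mem_mi: int = 0

    def fits(self, cpu_m: int, mem_mi: int) -> bool:
        # A pod fits only if BOTH its CPU and memory requests fit.
        return (self.requested_cpu_m + cpu_m <= self.allocatable_cpu_m
                and self.requested_mem_mi + mem_mi <= self.allocatable_mem_mi)


def schedule(nodes: List[Node], cpu_m: int, mem_mi: int) -> Optional[str]:
    """Return the name of the first node that fits, or None (Pending)."""
    for node in nodes:
        if node.fits(cpu_m, mem_mi):
            node.requested_cpu_m += cpu_m
            node.requested_mem_mi += mem_mi
            return node.name
    return None


nodes = [
    Node("node-a", 2000, 4096, requested_cpu_m=1800, requested_mem_mi=2048),
    Node("node-b", 2000, 4096, requested_cpu_m=1500, requested_mem_mi=3900),
]
# node-a lacks CPU and node-b lacks memory, so this pod stays Pending.
print(schedule(nodes, cpu_m=500, mem_mi=512))
```

Note that the check uses requests, not live usage: a node can look idle in your dashboards and still be "full" from the scheduler’s point of view.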

- CAS or Karpenter reacts

This is where Cluster Autoscaler (CAS) or Karpenter steps in:

👉🏻 CAS sees unschedulable pods and decides to scale the node group. It’s conservative and takes a bit more time to act.

👉🏻 Karpenter, on the other hand, is faster and more flexible. It dynamically provisions new nodes that match the Pending pods’ scheduling requirements (such as resource requests, instance type, zone, node labels, or tolerations for taints).

Both tools aim to bring up new nodes that can accommodate those Pending pods.
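As a rough illustration of the sizing question both tools have to answer, the sketch below estimates how many nodes of one fixed size are needed to hold a batch of Pending pods, using first-fit-decreasing bin packing. This is an assumption-laden simplification, not the actual algorithm of either tool: `nodes_needed` is a hypothetical helper, it considers a single node size, and it ignores system-reserved resources, DaemonSets, and scheduling constraints.

```python
from typing import List, Tuple


def nodes_needed(pending: List[Tuple[int, int]],
                 node_cpu_m: int,
                 node_mem_mi: int) -> int:
    """Estimate node count for pending pods given as (cpu_m, mem_mi) requests."""
    free = []  # one [free_cpu_m, free_mem_mi] entry per new node
    # Place the largest pods first (first-fit decreasing).
    for cpu_m, mem_mi in sorted(pending, reverse=True):
        if cpu_m > node_cpu_m or mem_mi > node_mem_mi:
            raise ValueError("pod does not fit on an empty node of this size")
        for slot in free:
            if cpu_m <= slot[0] and mem_mi <= slot[1]:
                slot[0] -= cpu_m
                slot[1] -= mem_mi
                break
        else:
            # No existing new node has room, so add another one.
            free.append([node_cpu_m - cpu_m, node_mem_mi - mem_mi])
    return len(free)


# Five pods requesting 1 CPU / 1 GiB each, on 4 CPU / 8 GiB nodes.
print(nodes_needed([(1000, 1024)] * 5, node_cpu_m=4000, node_mem_mi=8192))
```

With a fixed node size, the fifth pod forces a second, mostly empty node. Choosing among several instance types instead of a single size is the kind of flexibility the Karpenter description above refers to.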

- New nodes are added, pods get scheduled

Once the new nodes are ready, the Kubernetes scheduler places the Pending pods onto them. They move from Pending → Running, and your app now has the extra capacity it needs.

- Later, when traffic drops

👉🏻 HPA scales the replicas back down.

👉🏻 If some nodes are underutilized or completely idle, CAS or Karpenter deprovisions them.
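What "underutilized" means can be sketched with a request-based utilization check. The 0.5 threshold below is assumed to mirror Cluster Autoscaler’s documented default scale-down utilization threshold. The function name is hypothetical, and the real tools also verify that the node’s pods can be rescheduled elsewhere and wait for a cool-down period. Karpenter’s consolidation logic works differently and is not modeled here.

```python
def is_scale_down_candidate(requested_cpu_m: int,
                            allocatable_cpu_m: int,
                            requested_mem_mi: int,
                            allocatable_mem_mi: int,
                            threshold: float = 0.5) -> bool:
    """Return True if the node looks underutilized by pod requests."""
    cpu_util = requested_cpu_m / allocatable_cpu_m
    mem_util = requested_mem_mi / allocatable_mem_mi
    # Both CPU and memory must be below the threshold, so the higher
    # of the two utilizations decides.
    return max(cpu_util, mem_util) < threshold


# 20% CPU and 25% memory requested: a removal candidate.
print(is_scale_down_candidate(400, 2000, 1024, 4096))
# 20% CPU but 75% memory requested: the node stays.
print(is_scale_down_candidate(400, 2000, 3072, 4096))
```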

😎 Just like that, your app and cluster scale down gracefully, saving you money and keeping things lean.

Real-World Advice

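- Always define proper resource requests and limits for your pods. Autoscalers rely on requests to make decisions: HPA measures CPU and memory utilization as a percentage of the requested amount, and the scheduler and node autoscalers use requests to decide whether a pod fits on a node.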

- Monitor Pending pods. They’re a key signal that infrastructure scaling is needed.

- Prefer Karpenter if you need fast, flexible, cost-aware node provisioning (especially for bursty workloads or varied instance types).

- Use custom metrics in HPA if CPU/memory isn’t a great indicator of app pressure (e.g., queue length, request rate, latency).

- Track scaling latency in your observability stack. How long it takes to get from load increase to pod readiness matters.
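For the last point, one simple way to reason about scaling latency is to break it into per-stage durations along the chain of events described earlier. The sketch below assumes you can export a timestamp for each stage from your observability stack. The stage names and timestamps are hypothetical placeholders, not real measurements.

```python
from datetime import datetime
from typing import Dict, List, Tuple


def stage_latencies(events: List[Tuple[str, str]]) -> Dict[str, float]:
    """Compute seconds between consecutive stages, plus the total.

    events is an ordered list of (stage_name, ISO 8601 timestamp).
    """
    times = [(name, datetime.fromisoformat(ts)) for name, ts in events]
    result = {}
    for (prev_name, prev_t), (name, t) in zip(times, times[1:]):
        result[f"{prev_name} -> {name}"] = (t - prev_t).total_seconds()
    result["total"] = (times[-1][1] - times[0][1]).total_seconds()
    return result


# Hypothetical timeline for one scale-up, for illustration only.
events = [
    ("load_increase", "2024-01-01T12:00:00"),
    ("hpa_scaled", "2024-01-01T12:00:30"),
    ("pods_pending", "2024-01-01T12:00:32"),
    ("node_ready", "2024-01-01T12:02:10"),
    ("pods_ready", "2024-01-01T12:02:40"),
]
for stage, seconds in stage_latencies(events).items():
    print(f"{stage}: {seconds:.0f}s")
```

Splitting the total this way shows which stage dominates, for example node provisioning versus pod startup, and therefore where tuning will pay off most.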

Conclusion

Kubernetes autoscaling isn’t a black box. It’s a smart choreography between your app’s scaling needs and your cluster’s capacity to serve them. When you understand how HPA, Deployments, and CAS/Karpenter interact, you gain the power to fine-tune your platform for speed, efficiency, and reliability.

Next time you see pods stuck in Pending, you’ll know exactly what’s happening and how to fix it. 😉